Getting Started

Select from the following guides to learn more about how to use OpenLLMetry:

Start with Python

Set up the Traceloop Python SDK in your project

Start with JavaScript / TypeScript

Set up the Traceloop JavaScript SDK in your project

Start with Go

Set up the Traceloop Go SDK in your project

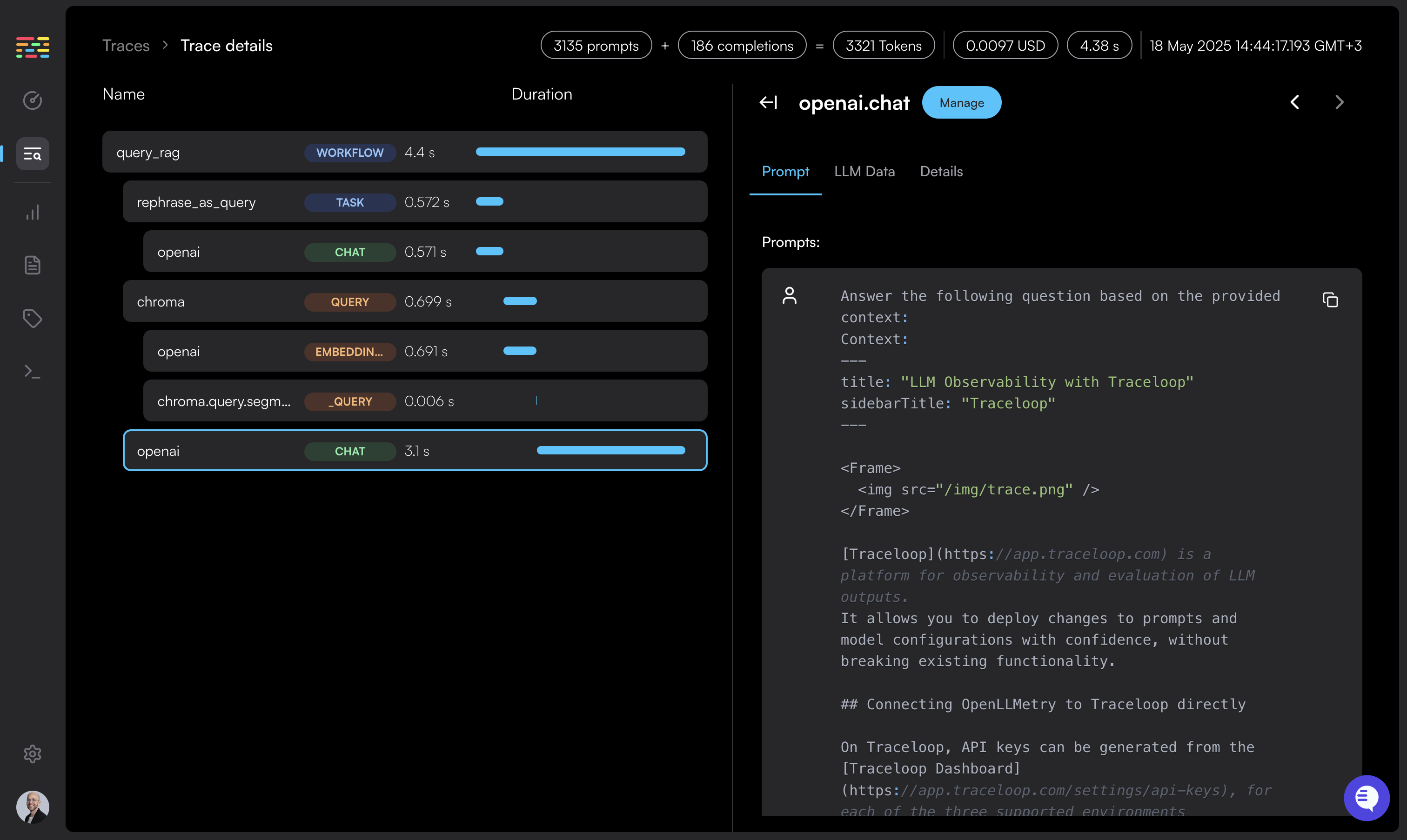

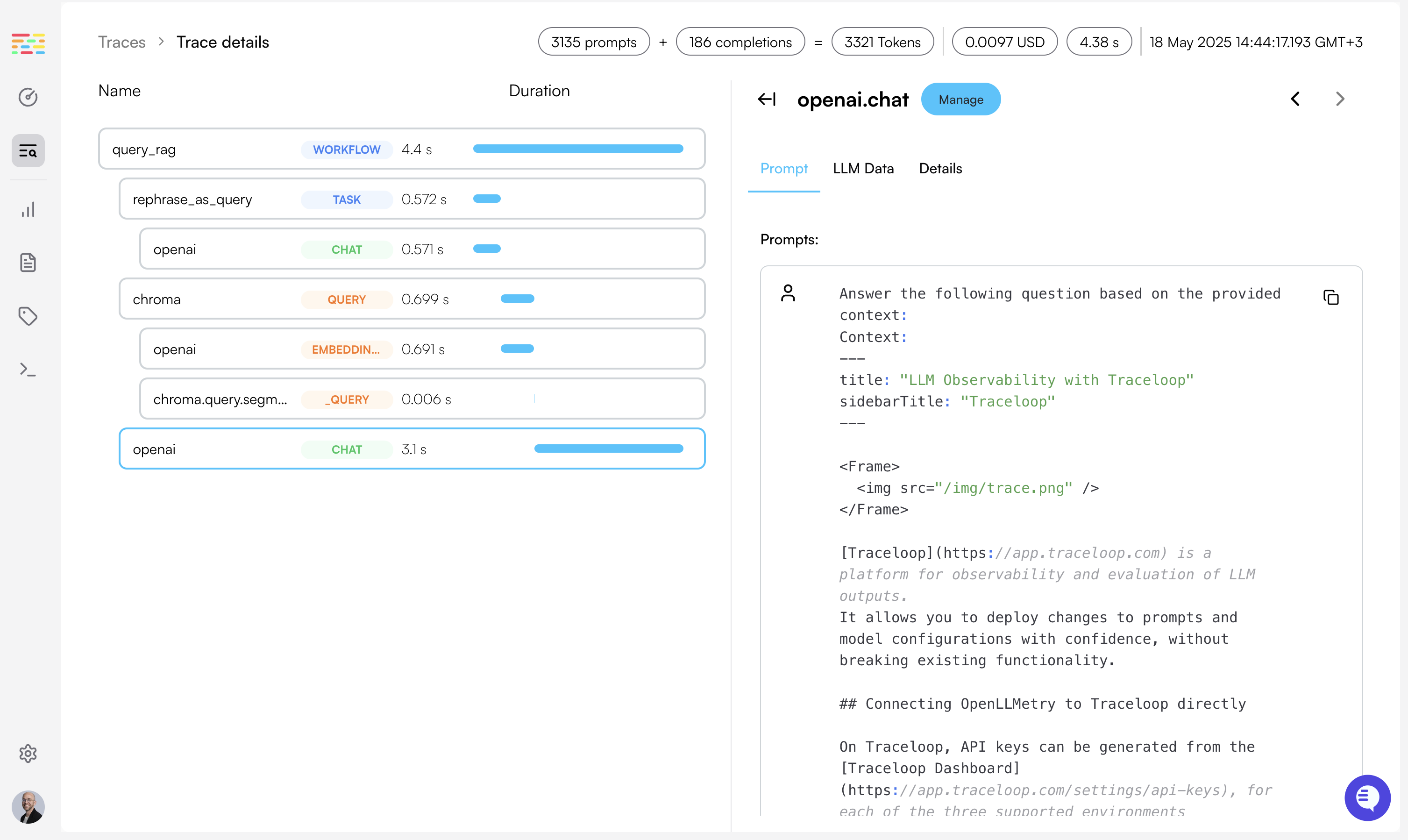

Workflows, Tasks, Agents and Tools

Learn how to annotate your code to enrich your traces

Integrations

Learn how to connect to your existing observability stack

Privacy

How we secure your data